I wanted to test how I can connect other than public data sources to my Azure Data Factory SSIS IR. Namely I wanted to be able to read files from a file share. Originally I thought I would need so called Self-Hosted Integration Runtime, but actually you can connect SSIS IR to your On-Premise data sources. If you don’t have SSIS IR, then you would need Self-Hosted IR connecting On-Premise data sources. It’s basically installs own Azure VM to your Azure environment.

I tested also connection to On-Premise SQL Server in other environment. It was quite tricky and took me a while until I found this article: https://docs.microsoft.com/en-us/sql/integration-services/lift-shift/ssis-azure-connect-with-windows-auth?view=sql-server-2017

According to it I needed to store my domain credentials into SSISDB like this: catalog.set_execution_credential @user='<your user name>’, @domain='<your domain name>’, @password='<your password>’

After that I finally got my connection to work. Of course other prerequisite was to open On-Premise firewall for connections from SSIS IR.

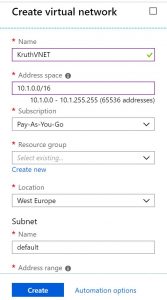

According to this document https://docs.microsoft.com/en-us/azure/data-factory/join-azure-ssis-integration-runtime-virtual-network I was supposed to create my own Azure Virtual Network and connect my SSIS IR and my Azure VM to that. Creation of VNet is straightforward task.

I used default subnet and did not touch to allocated IP address space. Note, this IP address space is internal, this is important point, because I stumbled to this later on. I also verified that my subscription had Microsoft.Batch as Resource Provider.

Next it was time to reconfigure my SSIS IR. It had to be stopped first to be able to change configuration. In third configuration page you can define to which VNet and Subnet you want your SSIS IR connect to.

Then I joined my Azure Virtual Machine to same VNet I had just created. After that there are two different IP addresses – public IP address and internal IP address, which can be found by clicking “Virtual network/subnet” name.

In addition I needed to add couple of firewall rules under Network settings to allow inbound and outbound connections from my VNet.

Note, those numbers in the beginning of the line tells the order of applied rules.

Now everything was good to go. I made first my SSIS package in Visual Studio so, that I had my file stored locally on my hard drive. After finishing development, I deployed my package into the Data Factory SSISDB.

Connecting to Azure SQL PaaS is a little bit different from normal SQL Server connection. Server name is <yourserver>.database.windows.net, e.g. kruthnesqlserver.database.windows.net. In addition you need to open second tab “Connection properties” and explicitly define your database name.

As a side note one more fact about Azure SQL Paas server, which I learned later. Nevertheless you can have many databases on the same server, those databases are not accessible by each other as in traditional SQL Server. E.g. if I have databases “StagingDatabase” and “DWDatabase” on the same server, I can’t write a query:

UPDATE X

SET X.Name = Y.Name

FROM DWDatabase.TargetTable As X

JOIN STGDatabase.SourceTable Y ON X.Id = Y.Id

It just does not work. Of course there are workarounds like using External Data Source, but it’s a little bit complicated. I ended up creating and using different database schemas for different purposes.

Back to main subject. I deployed my package to this Azure SSISDB, which was created during creation of Data Factory SSIS Integration Runtime. Then came the tricky part for me. I tried to change the source value of the file to load it from Virtual Machine. My mistake was to use public IP address of the VM. It took me a while before I figured out, that I should use the private IP of that VM.

After changing the source value of the file into the package, I logged into Data Factory and created a pipeline containing SSIS Package task pointing to my just deployed package.

Next time I’m planning to test Microsoft’s Modern Data Warehouse architecture. It doesn’t involve SSIS at all! Data is first loaded with Data Factory into blob storage / Polybase in a raw format. Data Factory does have quite many connectors already today. Data transformation is done with Azure Databricks, which is a quite new addition to Azure stack. This means mainly Python, Scala, PySpark (dialect of Python), but you can use also plain SQL. Back from graphical user interfaces to coding… At the end data is then loaded into Azure DW Gen2. So it’s more like ELTL approach.

Azure DW Gen2 was released 1.5.2018 and should be much better than Gen1, which really wasn’t viable option for real DW. At least marketing talks are quite positive about Gen2.