To celebrate my recent AWS certification, I wanted to write something related to AWS and data engineering. I have written previously about AWS Glue, so not about that this time. Then I had an idea, because microservices are nowadays a hot topic. I will investigate can I use AWS Lambda to create ETL microservice. AWS Lambda is an event-driven, serverless computing platform.

Sometimes your ETL requirement might be quite small, so you don’t want to spin up clusters, wait for starting and pay for more than what is actually needed. Lambda natively supports Java, Go, PowerShell, Node.js, C#, Python, and Ruby code. Python is quite popular language when doing code based ETL e.g. with Databricks/Spark. However, Lambda fits only for small scale ETL, because one function execution can only last up to 15 minutes.

To be honest, it was not so easy as I thought, but the end result is very compact and easy to replicate after it has been done once.

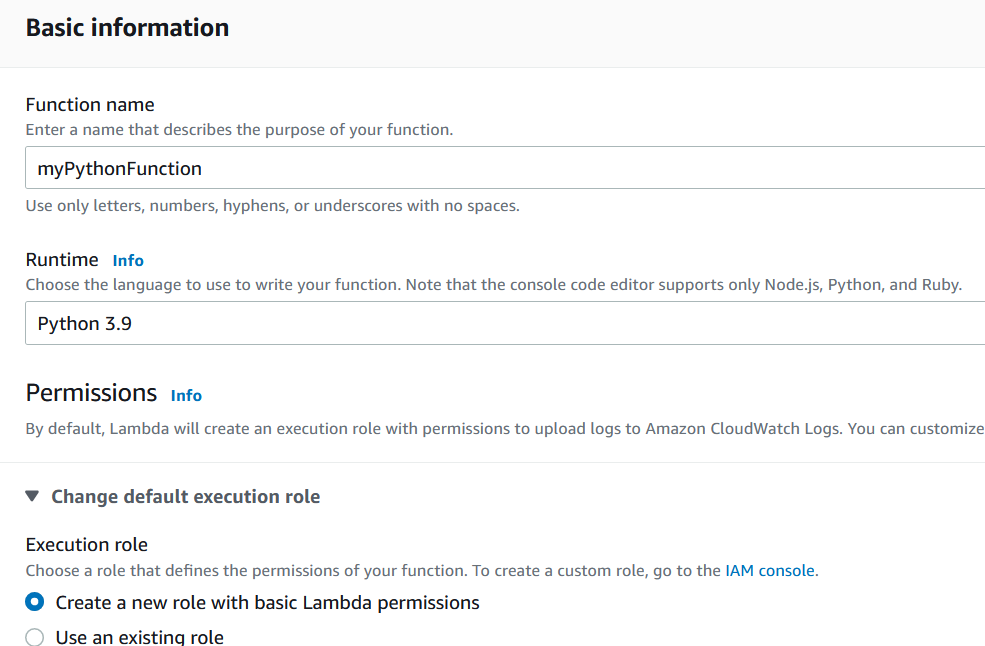

First you go from AWS Console to Lambda service and from there to Functions and Create Function. There are several options to create a new function and I selected ”Author from scratch”.

I selected the latest Python as my runtime. I had to change it to version 3.7 later on, and I will explain the reason also later on. On permission settings, I created a new role, because I didn’t have any existing Lambda roles. Note the role name for later usage. After that, you are ready to develop your code.

Actually, I did my development on my own Python editor, because it’s not easy to debug your code in Lambda editor. I only added Lambda specific parts in the Lambda editor.

I just wanted to test something simple. Read a csv-file from S3 bucket, do small changes and write it back to S3 bucket. My file had around 300 rows containing Finnish municipality inhabitant figures. My small ETL process adds 10 % to those inhabitant figures and round the result to integer. The entire code can be found below.

import json

import boto3

import pandas as pd

s3_client = boto3.client("s3")

def lambda_handler(event, context):

bucket_name = event["name"]

s3_file_name = event["key"]

s3_out = 'Out_'

s3_file_out = s3_out + s3_file_name

df=pd.read_csv(f's3://{bucket_name}/{s3_file_name}', sep=',', header=1, encoding= 'unicode_escape')

df['2020'] = df['2020']*1.1

df['2020'] = df['2020'].astype(int)

df.to_csv(f's3://{bucket_name}/{s3_file_out}')

return {

'statusCode': 200,

'body': json.dumps('Lambda rules!')

}Before I tested my code, I gave my Lambda role access to S3. It can be done in IAM. I just gave full access, because I didn’t want to fine tune access permissions in my test.

To be able to test execute your code, you need to create a test event. Remember, Lambda is event based serverless compute platform. In production use something always triggers code execution. Trigger could be e.g. new file arrival to S3 bucket or call from other application. I didn’t test triggering in my scenario, because you can also execute your code by creating a test event.

Above the code window is a test button. By clicking that first time, you need to create a test event. If everything is hard coded in your code, you don’t need to change anything. In my case, I had two custom values: name and key, which referred to bucket name and file name. E.g. bucket_name = event[”name”]. Normally these values would be provided by the trigger. I configured my test event to have correct values.

In the actual code, I wanted to use pandas library, because in my opinion it’s superior when handling Python dataframes. There was the first challenge. Code failed, giving an error of missing pandas function. Lambda doesn’t natively support pandas library.

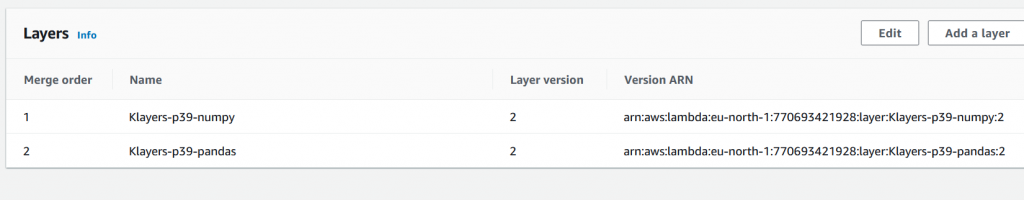

After searching, I found this article, which explained I need to use extra function layers to be able to use not natively supported libraries. First, I used ready-made layers created by the article author, which can be found here. Those layers worked very well!

Layer addition can be found from code window down under.

Layers can be added by different methods. In this case, I used ”Specify an ARN”, because I used ready-made layer.

After that, the execution of my code was successful, but I didn’t create a new file. That was a start of a real headache. It took me a while to understand what is wrong with my code. Finally I found a StackOverflow discussion where somebody mentioned casually in the comments, that pandas library uses s3fs library to communicate with S3. Unfortunately, I couldn’t find that as a ready-made layer, so I had to create it by myself. A new challenge!

I read from somewhere, that layer needs to be created on Linux with the same Python version your function is using. I don’t have Linux on my machine, so what now? Luckily, I found this article, which explained how to utilize AWS CloudShell to do that with detailed instructions. I do not copy paste the instructions here, but read the article if needed.

I created that layer into my Lambda (note correct versions). After creation, that layer still needs to be attached to your function. This time I used ”Custom layers” option. However now I got an error message of missing pandas library. What now? Luckily I realized quite soon to check the Python version of my CloudShell environment.

python --versionAnswer was Python 3.7. Next thing for me was to create a new function with the correct Python runtime version, which in my case was 3.7.

After that I got rid of error message, but still no luck. The output file was still missing. After searching, I found this article stating, that there is a mismatch between required current boto3 and s3fs libraries. So instead of installing the latest version, I downgraded my library to an older version.

pip install "s3fs<=0.4" -t . --upgradeI repeated all the steps to add that layer to Lambda and attached it to my function. Finally, my output file got created! Quite a bit of testing, but now it would be easy to repeat. First time is always the hardest.

My original file looked like this:

And my output file looked like this:

Not quite what I expected. Some part of the process couldn’t handle Scandinavian letters, but values were correct! I decided to leave that letter handling for some other time.

I would say AWS Lambda definitively could be used for small scale ETL. I have understood it’s not too expensive either. Likewise, I would assume Azure Functions work the same way. Maybe I will test that next.